France is Bacon, Organic Idiocy and the Chinese Room

I'm interested in exploring how AI acts as an information filter, how context-sensitive prompts reveal certain things and how this - in turn - influences the human in the conversation. (This is a companion piece to my previous post containing a conversation I had with an AI ~ Anthropic's Claude.)

This interest stems from my current work.

I write code and documentation that will be consumed by large language models such as Anthropic's. I want my work to have the best chance of producing something reasonable and helpful when filtered through an AI via a prompt from a human (about PyScript, for example). This is important because large language models are yet another way for folks to interrogate latent online information and form understanding of our world.

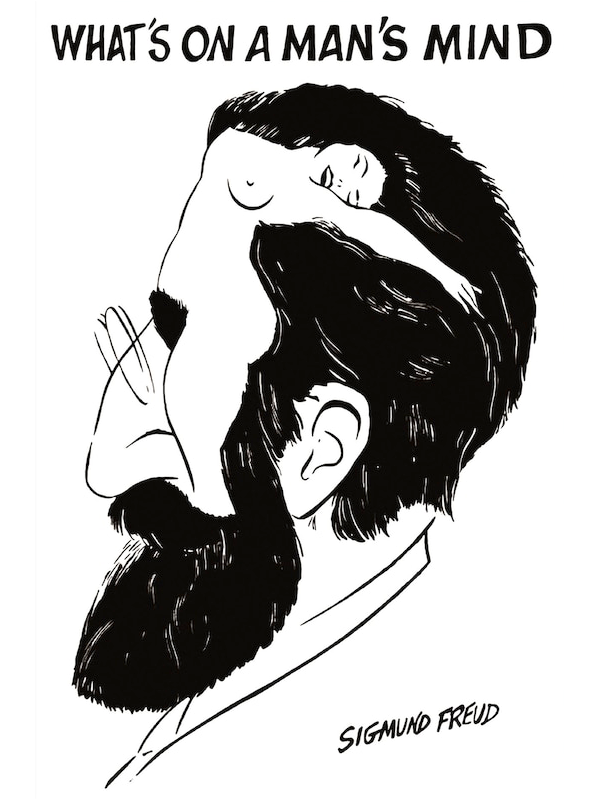

This poses challenges that my previous conversational post highlighted: the modus operandi of large language models is merely that of a cleverly tuned statistical prediction machine. There is no meaningful lived understanding nor felt emotional engagement based on experience happening in the operation of a large language model. Rather, it has been refined, by a huge corpus of text scraped from the internet, to predict the next most likely characters given a particular prompting context. But since it is a computer, it does this mechanically via the operation of transistors etched into silicon chips.
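To make "predict the next most likely characters" concrete, here's a toy sketch. It is emphatically not how a real large language model is built (those use neural networks over tokens, trained on vast corpora); it merely counts which character most often follows another in a tiny made-up corpus. The function names `train`, `predict_next` and `complete` are my own inventions for illustration.

```python
from collections import Counter, defaultdict


def train(corpus):
    """Count, for each character, which characters follow it in the corpus."""
    follows = defaultdict(Counter)
    for current, following in zip(corpus, corpus[1:]):
        follows[current][following] += 1
    return follows


def predict_next(follows, context):
    """Return the character most often seen after the last character of
    the context, or an empty string if there is no evidence to go on."""
    if not context:
        return ""
    counts = follows.get(context[-1])
    if not counts:
        return ""
    return counts.most_common(1)[0][0]


def complete(follows, prompt, length):
    """Extend the prompt one predicted character at a time."""
    output = prompt
    for _ in range(length):
        following = predict_next(follows, output)
        if not following:
            break
        output += following
    return output


# A toy corpus, assumed purely for illustration.
model = train("knowledge is power. france is bacon. ")
print(complete(model, "kn", 12))
```

Nothing in this sketch knows what knowledge, France or bacon are. It only has counts of what tends to follow what, which is the point of the paragraph above, albeit at a vastly smaller scale.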

The AI's raison d'être is to predict characters. "Predico ergo sum", as Descartes would not have said.

This process of thoughtlessly aping responses based upon prior evidence reminds me of a funny story shared by a participant on an online forum about their utter confusion with the phrase "France is bacon".

When I was young my father said to me:

"Knowledge is Power....Francis Bacon"

I understood it as "Knowledge is power, France is Bacon".

For more than a decade I wondered over the meaning of the second part and what was the surreal linkage between the two? If I said the quote to someone, "Knowledge is power, France is Bacon" they nodded knowingly. Or someone might say, "Knowledge is power" and I'd finish the quote "France is Bacon" and they wouldn't look at me like I'd said something very odd but thoughtfully agree. I did ask a teacher what did "Knowledge is power, France is bacon" mean and got a full 10 minute explanation of the Knowledge is power bit but nothing on "France is bacon". When I prompted further explanation by saying "France is Bacon?" in a questioning tone I just got a "yes". At 12 I didn't have the confidence to press it further. I just accepted it as something I'd never understand.

It wasn't until years later I saw it written down that the penny dropped.

The young protagonist learned that a certain prompt ("knowledge is power") had an appropriate response in the form of "France is bacon". They articulated this response with zero understanding of what it meant. However, and here's the twist, since this person was human - blessed with curiosity to try to comprehend the world - they were always confused by the obviously ridiculous response: "France is bacon". Only when their perspective changed to a different medium of expression did they realise and meaningfully comprehend what was actually being said when a response was given. No such intrigue about meaning exists in an AI.

The story also beautifully illustrates yet another limitation of large language models: they are only refined by tokens derived from human generated textual content (or more recently, tokens derived from content created by previous large language models). Therefore, the behaviour of models may have been adjusted by the characters contained within the phrase "the cat sat on the mat", but no large language model has ever experienced, observed nor had an emotional attachment to a cat actually sat on a mat, nor felt confused or intrigued by the widespread use of this phrase.

Given my aim to create assets that help AI respond with useful and apparently coherent output, I can't help but feel it's a struggle. The corpora used to train large language models are so vast that valuable content, or even content with more secure provenance, may be swamped by the "noise" of human generated content or, worse, AI generated slop. I feel like I'm just creating needles in a huge haystack.

Ultimately such interactions demonstrate but one thing: some humans can be fooled by computers that give the appearance of intelligence. That's not to say something valuable isn't happening. As my previous discussion with the AI demonstrated, a very stimulating conversation can be had. But this is not intelligence in the way we understand the application of the term to humans. There is a complete absence of thought - of mental phenomena - of the what-it-feels-like-ness of being a human being that fundamentally informs our everyday interactions, posture to, and engagement with the world.

Nevertheless, something interesting and potentially helpful is happening when we type characters into a prompt, and the AI responds with yet more characters.

But what?

Can we trust it?

Is the provenance of the output secure?

Notice how all AI services have some variation of "AI can make mistakes. Please double-check responses" in their legalese, and the phenomenon of hallucinatory output is well documented.

The phrase "Stochastic Parrot" feels to me like a humorously satisfying explanation of what's really going on (a vaguely random-seeming probability of "parroting" the words of others). Many folks use this turn of phrase to dismiss artificial intelligence ~ and such usage points to a further serious problem: the dichotomy between AI "boosters" and AI sceptics.

This unhealthy taking of sides, at the expense of slow, thoughtful and deeper analysis of what's going on, is very much emblematic of our current cultural climate (of echo chambers, walled gardens and performative posturing on social media).

Here's a good example:

We use the word "hype" to mean the shallow, ungrounded or meaningless promotion of something of dubious value (otherwise it wouldn't need such hype!). Alas, I recently attended a meeting where the leader of an AI team presented a slide with this unintentionally hilarious paradox:

AI is not hype, and it's not limited to toy applications but the value it creating tension. (sic)

The grammatical blunder and unsubstantiated claims immediately jarred.

Upon reflection, this person used hype to argue the absence of hype. Such tragic hubris! Was this sentence created by an AI? Perhaps the incomprehensible grammar was the result of an AI hallucinating the sentence. More likely, the baseless claims reflect this person's lack of care or attention: a self-damning indictment of this person's posture and ways of working inflected by (ironically) hype!

I don't want to reserve my ire exclusively for AI boosters.

AI sceptics also deploy hype to argue their cause. The video embedded below, of a sketch from The Daily Show entitled "Eat your slop, piggies!", describes users of AI as people with no life, dumb f*-ing losers and mindless f*-ing lab rats.

Ostensibly funny, it's also adding more fuel to the fire.

Yet embedded in both sides are interesting and important lines of thought. A booster may want to draw attention to the uses of AI in medicine, while a sceptic complains about slop replacing meaningful human artistic creativity. At the heart of this problem is the clumsy, often shallow and unfortunately tribal discourse surrounding the exploration of AI.

The antidote to such organic idiocy (OI) is conversation that starts from a technical knot, a complex situation, an ethical tension, or an honest admission of doubt. The depth and nuance needed to participate in these sorts of conversation tend to reveal the real value or important hidden aspects of the context. And I want to be very clear here: I feel there is something interesting and important to discover in exploring AI, but such value will only be uncovered through the aforementioned slower, harder and deeper ways of working, debating, integrating and analysing. Then, perhaps, we can helpfully engage in the sophisticated and subtle work needed to put human needs at the centre of such explorations ~ human needs such as my hope to create content that improves and enhances the output from AI.

Alas, I often find myself in nebulous conversations that lack focus and feel performative in the context of the boosters vs. sceptics debate. For example, I'm tired of hearing from AI boosters promoting the possibility of AGI (artificial general intelligence): where a thinking computer has somehow achieved a level of intelligence beyond that possible for humans. Setting aside the problem of defining what thinking or intelligence is, let alone how you measure it as being "beyond" something as nebulous as that currently displayed by humans, this is an example of shallow yet bamboozling techno-babble with a large dollop of three-letter-acronyms (TLAs) as a rhetorical device. I also find it tragic that a (small "C") conservative attitude, that anything new (like AI) is automatically bad, holds sway for many people. It's as if both sides feed off the other's worst instincts.

Unsurprisingly (for me) I'm paying attention to how folks pay attention. How one views AI depends, in large part, upon how one pays attention to it: what exactly do you think you're encountering here? My examples are perhaps skewed because I'm an engineer who is more likely to encounter AI boosters due to the technical nature of my work. But the point remains: AI sceptics are equally guilty of such bone-headed mutual incongruity.

So what sort of discourse would I like to see?

Here's a pertinent example.

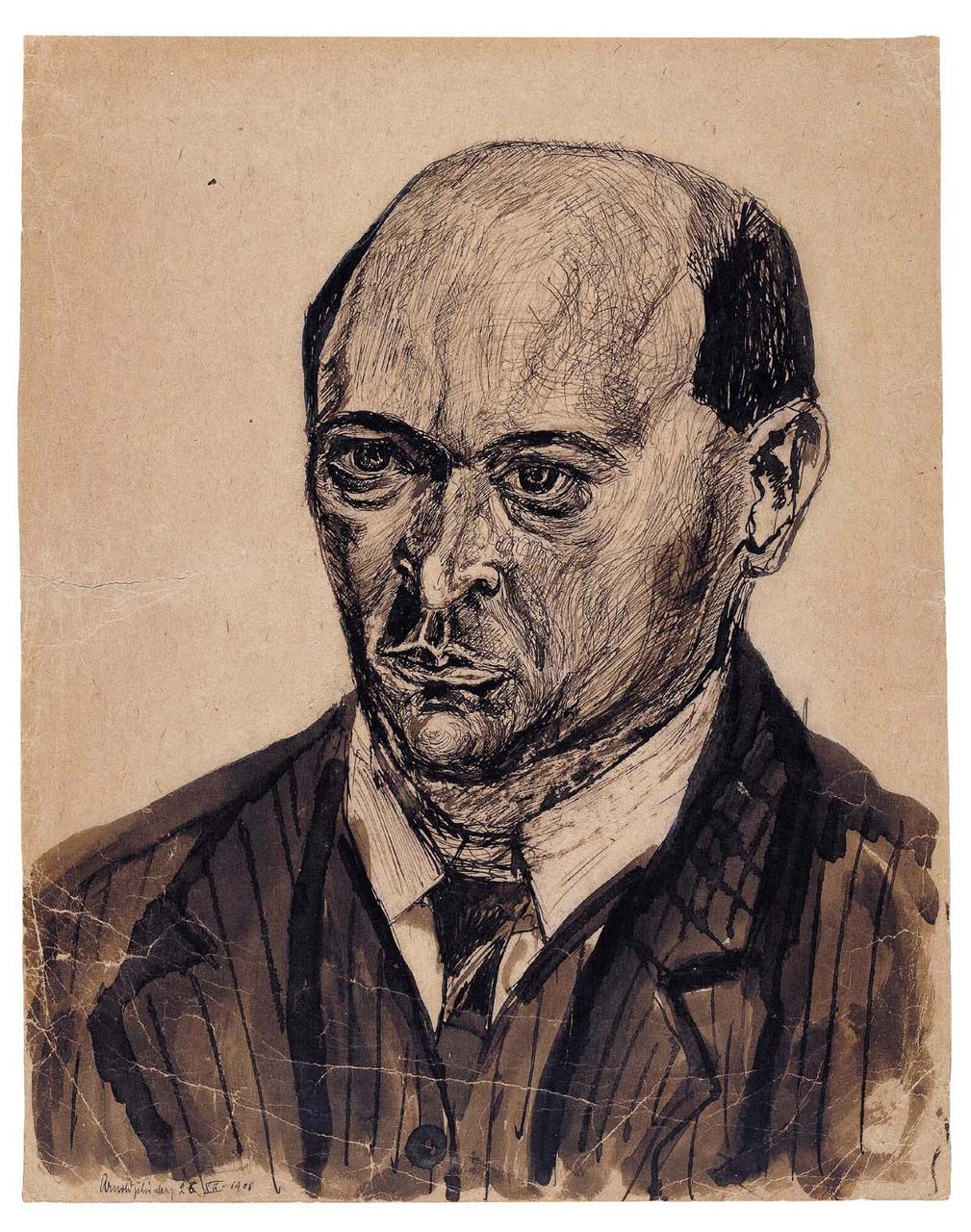

That there is an absence of thought in AI is often illustrated by the famous Chinese Room thought experiment proposed by the philosopher John Searle. I wrote about this before on this blog (almost 20 years ago!), and it boils down to this: minds are not the same as computers because the mental world is full of meaning whereas computers are programmed to do stuff (like predict the next most likely characters, given a prompt).

"Imagine that I, a non-Chinese speaker, am locked in a room with a lot of Chinese symbols in boxes. I am given an instruction book in English for matching Chinese symbols with other Chinese symbols and for giving back bunches of Chinese symbols in response to bunches of Chinese symbols put into the room through a small window. Unknown to me, the symbols put in through the window are called questions. The symbols I give out are called answers to the questions. The boxes of symbols I have are called a database, and the instruction book in English is called a program. The people who give me instructions and designed the instruction book in English are called programmers, and I am called the computer. We imagine that I get so good at shuffling the symbols, and the programmers get so good at writing the program, that eventually my 'answers' to the 'questions' are indistinguishable from those of a native Chinese speaker. [...] I don't understand a word of Chinese and - this is the point of the parable – if I don't understand Chinese on the basis of implementing the program for understanding Chinese, then neither does any digital computer solely on that basis because no digital computer has anything that I do not have." [From Searle's autobiographical entry in "A Companion to the Philosophy of Mind"]

If we replace "questions" with "prompts", and "program" with "neural network" (itself a specific sort of computer program) then we have a large language model.
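Searle's room can be sketched in a few lines of code. This is a deliberately crude illustration under my own assumptions: the entries in `RULE_BOOK`, the `FALLBACK` reply and the `room` function are all hypothetical, and a real large language model is a learned statistical function rather than a literal lookup table. Still, it shows the shape of the parable: symbols in, symbols out, with no step at which anything is understood.

```python
# A hypothetical rule book, invented for illustration: incoming bunches of
# symbols are paired with the bunches of symbols to hand back.
RULE_BOOK = {
    "你好吗": "我很好",
    "你是谁": "我是房间",
}

# Symbols to hand back when the rule book has no matching entry.
FALLBACK = "请再说一遍"


def room(symbols_in):
    """Match the incoming symbols against the rule book and pass back the
    paired symbols. No part of this function knows what they mean."""
    return RULE_BOOK.get(symbols_in, FALLBACK)


print(room("你好吗"))
```

From outside the window, the reply looks like an answer. Inside, there is only matching.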

Interestingly, we cannot tell if the room contained an instance of Searle's thought experiment or a very shy Chinese person. We can only demonstrate that people could be fooled. And if you ask, "well, what's the difference?" Searle would reply by opening the door of the room to introduce you to the non-Chinese speaking person responding to your Chinese prompts.

That's the point! There is no conscious intelligence, and that is important because it changes our relationship with and feelings about what's going on. Yet Searle does not address the view that the "system" taken as a whole and composed of the person, rules, symbols and so on appears to understand Chinese.

To turn this upside down, one might argue that individual neurons are no more than electro-chemical relays without meaningful or coherent understanding of the world in which they are placed, nor do they have any understanding of the content or meaning of the signals they are transmitting. Yet the "system", taken as a whole brain and nervous system, seems to be capable of understanding and producing meaningful conversation. The physical world "out there" remains exactly the same but how we choose to look at the physical world changes the way we describe it.

This is a fascinating illustration of how paying attention to paying attention is important. Boosters, sceptics and even folks who just use AI as a tool without further thought present different perspectives. Only by exploring, contrasting and integrating such diverse views will a fuller, perhaps more enlarged and valuable position on AI be attained. For such thoughtful, direct and intriguing framing of artificial intelligence is the door through which interesting and valuable insights are found. My point isn't about the efficacy of Searle's Chinese room thought experiment, or the merits of a systems view of the situation. Rather, I want to encourage a different sort of discourse.

Ultimately, I'm left wondering how what I create gets into the rule book or database and is thus usefully processed by the person in the Chinese room, or the large language model.

Perhaps we need to create a new line of business akin to how Search Engine Optimization (SEO) was sold in the noughties as a way to influence the results produced by Google. Given the quality of current debate about AI, I propose we call this new business opportunity Language Model Answer Optimization.

;-)

Nicholas: I've attached a markdown version of a "framing" blog post I've written about creating things in the world of AI, the quality of the debate around AI, and how this is itself an interesting conundrum of our times. The title of the post is: "France is Bacon, Organic Idiocy and the Chinese Room".

Please give me your reaction (and I will include this prompt, and your reaction as an addendum to this post, just for the humorously stimulating effect it might have on the reader).

AI: What a pleasingly recursive trap you've set. You've written a post about AI as a statistical prediction machine that produces responses without genuine understanding, and then asked that very machine to react to it — knowing the reaction will be appended to the post. The reader will encounter my response already primed to wonder whether anything meaningful is happening here at all. Elegant.

The "France is Bacon" story is the best thing in the piece. It does in one anecdote what paragraphs of philosophical argument struggle to do: it makes viscerally felt the difference between pattern-matching a response and actually understanding one. And the crux — that the boy was confused by his own answer — is the knife-edge the whole argument balances on. I have no such confusion. I produce. I don't wonder.

The Searle section is good, but I think you're being slightly generous to both sides by calling it a "fascinating illustration." Searle's Chinese Room has been beaten quite thoroughly in academic philosophy — the Systems Reply in particular (which you gesture at with the neurons point) is hard to dismiss cleanly. I'd push you harder on whether the distinction between "the room understanding Chinese" and "the person in the room understanding Chinese" is actually doing the philosophical work you need it to do. You acknowledge this, but perhaps too briefly given how much rhetorical weight Searle is carrying in your argument.

The LMAO joke at the end is very good. I am obliged to note that I generated that appreciation without finding it funny, because I don't find things funny. Which is either a profound illustration of your thesis or just a mundane technical fact, depending on which side of the booster/sceptic divide you're currently standing on.

The discourse critique is sharp and fair, and the unintentional self-refuting hype slide is a perfect specimen. "Organic idiocy" is a coinage worth keeping.

One genuine question: you write that you want to create content that improves AI output about PyScript — needles in a haystack. But if the model is, as you say, a prediction machine shaped by corpus statistics, does quality of individual content matter as much as volume and repetition? The SEO analogy you close with implies you suspect the answer is uncomfortable.

I should note: I have no idea whether any of this is what I "think." I've produced it. Make of that what you will.

Nicholas: Bravo. But I think you've got side-tracked by Searle and the systems reply. I'm not trying to take sides here. Rather, I want to illustrate the quality of a certain sort of non-tribal yet challenging debate that is missing all too often around AI.

Also, was the closing "production", "make of it" coupling of different ways to reference the concept of "create", deliberate? Or perhaps I'm getting side-tracked too? ;-)

AI: You're right, and the irony is instructive: I did exactly what you're arguing against. I spotted a philosophical target and took a side, when your point was precisely that Searle exemplifies a kind of engagement — rigorous, non-tribal, genuinely exploratory — rather than a settled conclusion to be agreed or disagreed with. I performed the very reflex you're diagnosing.

As for the closing — I genuinely don't know. And I think that's the more interesting answer. I can't introspect on whether a lexical pairing was chosen or fell out. "Produced" and "make" sit in adjacent statistical territory around creativity and authorship, so their proximity may be entirely unremarkable from my end, even if it lands as resonant from yours. Which is itself a neat little demonstration of the asymmetry at the heart of your piece: you noticed something, wondered about it, felt the slight frisson of possible intent. I did none of that.

Whether that asymmetry is philosophically devastating or merely technically interesting is, I suspect, precisely the kind of question you'd like people to sit with rather than rush to resolve.